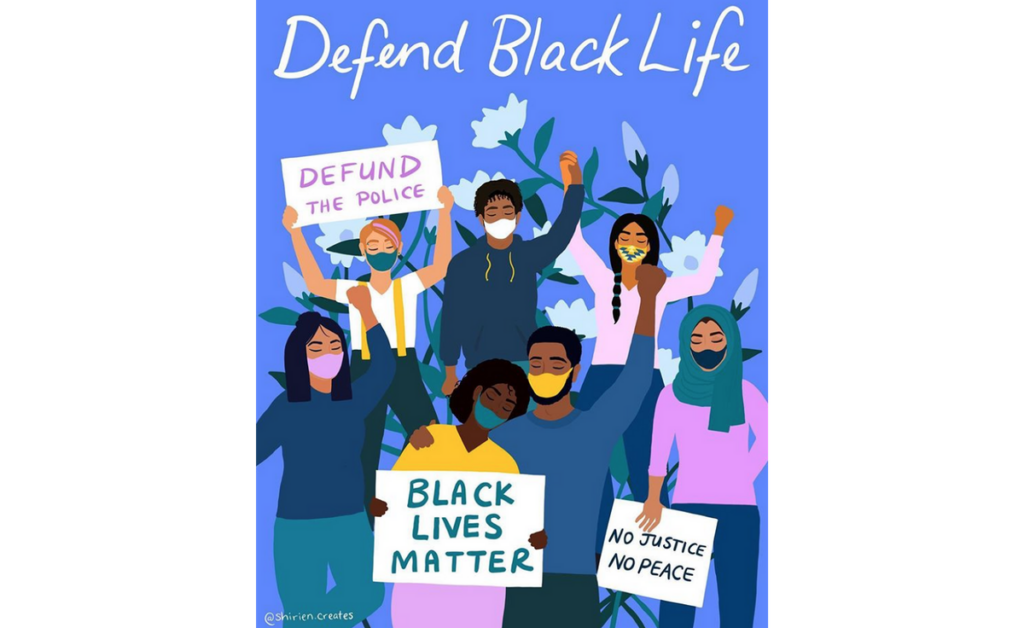

Illustration above by Shirien Creates.

Like so many of you, we’re deeply shaken by the deaths of Breonna Taylor, an EMT who was shot in her home in Louisville, Kentucky; Ahmaud Arbery, who was hunted down while out for a run in his own neighborhood in Brunswick, Georgia; Tony McDade, who was gunned down by a police officer in Tallahassee, Florida; George Floyd, who was killed when an officer pinned him down with a knee to his neck in Minneapolis, Minnesota; and countless other Black Americans who have lost their lives to generations of institutional racism, manifested in interpersonal actions. Though the particular reality of anti-Black racism in the US is center stage, every nation in which DataKind works carries its own fractures and deeply unequal systems.

It goes without saying that our work, and that of our mission-driven partners, is deeply affected and, in many ways, limited by anti-Black racism. Our frontline health projects are embedded in a healthcare system rife with racial inequity. Our projects related to microfinance or lending skirt the edges of a legacy of state-sanctioned disenfranchisement of people of color. Moreover, our staff, our Chapter leaders, and our volunteers live every day under the threat of a system that delivers justice to some but not to others.

Beyond continuing to identify opportunities for data science and AI to close these and other gaps in access and opportunity, it’s our responsibility to make clear where we stand. Today, we commit to protecting and defending Black lives. We stand in solidarity with those protesting the brutal murders of Black Americans, and with Black leaders, technologists, volunteers, and community members who fight for racial justice every day.

The issues of racism are far deeper and more human than any algorithm or analysis can hope to alleviate, and we know technology can exacerbate them. We need not look far to find an example of racist code or racially biased data. However, we also know we can use data and data science to surface bias and racism and to drive systemic change. As an organization sitting at the intersection of the data science community and the social sector, it’s also our work to undo racism.

Since our founding, a commitment to diversity has been one of our six core values. We know that our work is best when we collaborate across different perspectives, and that equity-centered projects can’t succeed without those most impacted by harm at the table. We have a standing commitment to hiring talent from communities historically underrepresented in the tech sector, but diversity alone isn’t enough. We remain committed to defining inclusion and equity within our organization even as we work to realize them in the world around us.

Some of the data scientists in our community have asked what they can do to help at this time. Below is what we recommend.

Within your work: It’s critical that we continue to ask ourselves what implications our analyses and algorithms have for communities of color. There are fantastic resources for better understanding AI, data, and their relation to racial discrimination, and we recommend familiarizing yourself with works like *Algorithms of Oppression*, *Race After Technology: Abolitionist Tools for the New Jim Code*, and *Automating Inequality*.

At DataKind, some of the questions we use to assess the effects of a project before we begin include:

- What’s the change the NGO wants to see? What tradeoffs are acceptable in getting there?

- Who has the power to use and deploy this tool?

- Who has the power to change or turn off this tool?

- What harm could be done if we fail?

- What harm could be done if we succeed?

Within your community: Give money to causes that fight for racial justice and an end to police violence. Support Black-owned businesses and movements. If you want to focus on groups that use data science and AI to advance racial justice, or that work to improve racial equity within the field of data science and AI, here are a few:

- Algorithmic Justice League

- America on Tech

- Black Girls Code

- Black in AI

- Campaign Zero

- Center for Policing Equity

- Data for Black Lives

We believe in these causes and will be donating $25,000 to groups working to advance racial equity.

As we work toward internal and external practices that enable us to live these commitments, we know that the work to undo systemic, interpersonal, and internalized racism is both deeply personal and a commitment for the long haul, not just for this week. As we work to ensure equitable hiring of staff and volunteers and to partner with underrepresented communities, we ask that you hold us accountable for doing better in the months and years to come. Putting out a statement is easy, but we’ll look to you to make sure we’re living up to our commitment.

At DataKind, our mission is to use data science and AI in the service of humanity, and that statement includes all of humanity. None of us is free until all of us are free, and that’s why it’s imperative that we say loudly that Black Lives Matter and stand by those striving to alleviate the ills of anti-Black racism today and every day.